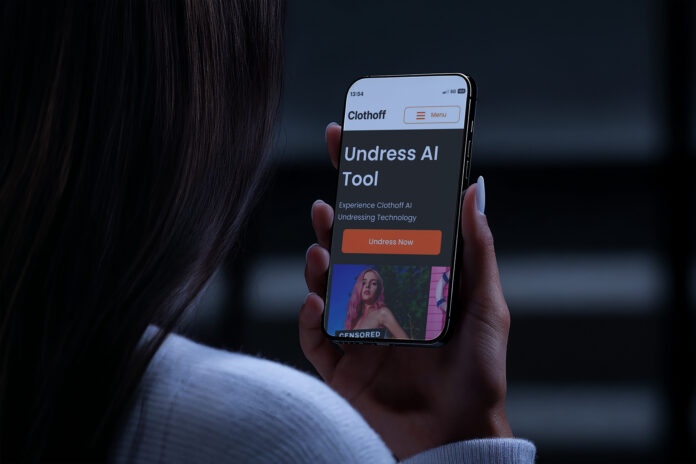

A pair of law-school clinics at Yale — the Media Freedom & Information Access Clinic (MFIA) and the Lowenstein International Human Rights Clinic — working with co-counsel, have brought a federal lawsuit in New Jersey against a website called ClothOff. The website allegedly uses artificial intelligence to create hyper-realistic nude images of real children and adults without their consent.

The complaint alleges that ClothOff marketed itself to teenagers and encouraged the generation of non-consensual nude images, and that the site’s operation and the sharing of those images caused serious harm to at least one identified teenage victim, including emotional distress and disruption of her high-school education.

The lawsuit also alleges that the operators of ClothOff used pseudonyms, fake identities, and masking services to evade detection, and that they profit from the website’s operation. One possible lead is that the operators may reside in Belarus.

Why This Matters for the Generative AI / Prompt Community

- This case highlights a growing risk area in generative AI: creating “undressing” or nude deepfake imagery without consent. For prompt creators and tool users, it is a reminder of the ethical and legal boundaries.

- For those building or sharing prompts that generate human likenesses, especially intimate or sexual content, this underscores the importance of consent, source image rights, and responsible use.

- Because the website is now the target of a federal lawsuit, we may see more litigation and regulatory attention on AI tools that facilitate non-consensual imagery. That may affect policy, platform rules, and prompt-sharing norms.

- From a community-content perspective, there is a potential new category of “harmful prompt use” that prompt repositories may choose to exclude or flag.

- The case suggests that the legal system is beginning to catch up with the AI generation layer (not just traditional photo editing), and prompt creators should be aware that not all “magic” prompts are risk-free.

Source: Yale Law School