Black Forest Labs has unveiled FLUX.2, a model that marks a decisive shift toward production-ready generative visual intelligence. Rather than positioning the release as yet another model in an already crowded AI landscape, BFL frames FLUX.2 as a tool designed for real creative work: campaigns, branding, visual storytelling, product imagery, and professional-grade editorial content. It is the result of months of iteration following the success of FLUX.1, and the company now describes this new version as a leap, not a step.

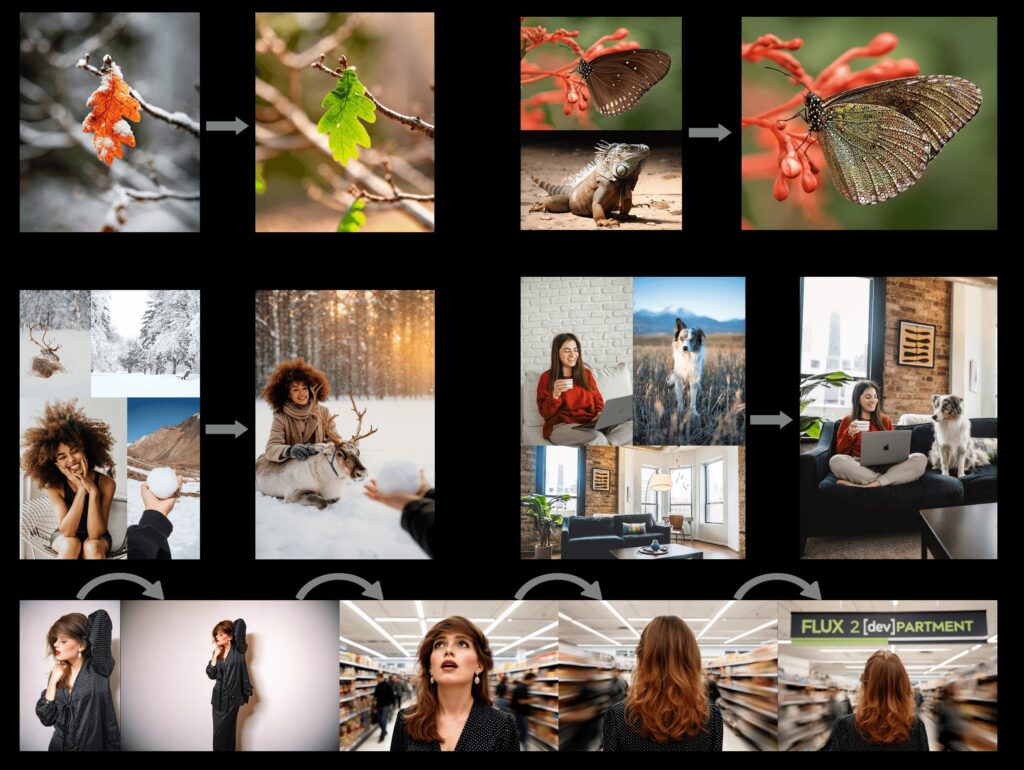

One of the defining features of FLUX.2 is its renewed focus on consistency and control. The model can ingest multiple reference images at once (as many as ten) and use them to maintain stable identities, products, or styles across a series of new renders. This is particularly valuable for creators who need visual coherence across campaigns or storytelling arcs. Whether working on branded characters, product lineups, or stylistically unified scenes, the model behaves less like a playful generator and more like a flexible creative partner capable of managing complex visual requirements.

Another noticeable improvement is technical fidelity. Textures, skin details, fabrics, lighting, and overall realism reach a level that situates FLUX.2 among the strongest models available today. It can output images up to four megapixels with a clarity that is especially useful for commercial visuals: product photography, lifestyle scenes, portraits, and high-detail environmental compositions. It also demonstrates a surprisingly reliable grasp of text and typography. Posters, UI mock-ups, signage, and editorial layouts, long considered weak points for many image models, are handled with noticeably better precision, allowing designers to prototype layout-driven content much faster than before.

What distinguishes FLUX.2 even further is BFL’s continued commitment to an “open core” approach. Alongside the professional and highly optimized API models, the company provides an open-weights version for community use. This allows researchers, hobbyists, and developers to study the model or adapt it to their own pipelines. BFL hints at an even more lightweight variant, FLUX.2 klein, which aims to make high-quality generative image tools more accessible to users with limited hardware. The result is an ecosystem where commercial studios, solo illustrators, and experimental developers can all find an entry point.

For creators, FLUX.2 represents a shift toward models that do not merely generate images but support real workflows. Marketing teams can prototype consistent campaign assets without reshoots. Illustrators can maintain stylistic continuity across entire visual series. Designers can test typographic compositions without relying on external tools. Even storytellers can produce coherent sequences featuring the same characters and settings. The model’s ability to understand spatial logic and compositional structure means that prompts no longer need to be endlessly micromanaged to produce reliable output.

Why FLUX.2 matters for creators

For anyone working with generative-AI visuals — illustrators, content creators, marketers, designers — FLUX.2 could be a major leap forward:

• You can create brand-consistent assets across multiple images — product mockups, campaigns, lifestyle photography — without manual compositing or re-shooting.

• It closes much of the gap between “AI-generated abstract art or sketches” and photo-quality, professional images that are ready for publication, print, or commercial use without extra cleanup.

• For those interested in organized prompt workflows or series of images (e.g., consistent characters for webcomics or storytelling, or consistent product visuals), the combination of multi-reference input and style consistency is a big win.

In a practical sense, the release is also a reminder that generative visual tools are becoming more integral to the creative process rather than optional embellishments. For anyone involved in digital art, graphic design, illustration, or content production, FLUX.2 feels like a preview of how creative teams may work a year from now.

SOURCE: Black Forest Labs